In this series, we go behind-the-screens and share how data flows into the Bench platform seamlessly from a range of different sources. We’ll describe the process of data monitoring and how validation tools can help marketers gather actionable insights from their campaigns.

Our development team is at the helm of the platform, continuously finding innovative solutions for our clients and team, and they work every day to keep it running like a well-oiled machine.

How do they do this? In part 1, we hear from our Director of Technology, David Cameron, about the systems the team has put in place to make sure data is imported smoothly. Essentially, we get a glimpse into a day in the life of the development team.

—

At Bench, we import data from over 20 different sources to report on all of our campaigns for our customers. This data is updated daily so our customers can make strategic decisions. What goes on behind the scenes to make sure this all works?

The short answer: we monitor everything at each stage and get notifications when anything breaks.

We’re always watching everything to make sure nothing goes wrong.

How it all works

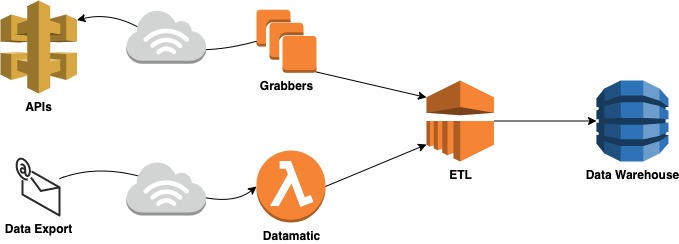

First, a quick introduction to how we import all the different data sources into the platform. It’s a complex system that pulls data from multiple sources into one centralised space, so our customers can view all the relevant data and make strategic decisions in an instant. The main stages are described below.

The ‘grabbers’: pulling data from Facebook, DV360 and The Trade Desk

We have some services we call grabbers, which connect to the platforms’ APIs. At this first stage, we have alerts if a grabber fails for any reason. Sometimes these trigger because of an issue on the demand-side platform (DSP) side, but often it is just a short-term issue that goes away, and the next import works fine. We get notified each time a grabber fails and investigate, just in case.
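To make the idea concrete, here is a minimal sketch of a grabber wrapped with a failure alert. It is not our production code: the `run_grabber` function, the `fetch` callable and the `notify` callable are hypothetical names chosen for illustration, and a real setup would send the message to whatever alerting channel the team uses.

```python
from typing import Callable, Optional


def run_grabber(
    name: str,
    fetch: Callable[[], list],      # hypothetical: calls the platform API and returns rows
    notify: Callable[[str], None],  # hypothetical: sends an alert to the team
) -> Optional[list]:
    """Run one grabber and send a notification if it fails for any reason."""
    try:
        return fetch()
    except Exception as exc:
        # Every failure is reported, even if the next import is likely to succeed.
        notify(f"Grabber '{name}' failed: {exc}")
        return None


# Example usage with a grabber that simulates a short-term API problem.
alerts = []

def failing_fetch() -> list:
    raise ConnectionError("API timeout")

rows = run_grabber("facebook", failing_fetch, alerts.append)
```

After this runs, `rows` is `None` and `alerts` holds one message describing the failure, which is the point at which someone would investigate.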

Datamatic: preparing data from other platforms to be processed

We have an internal tool called Datamatic, which we use to transform data from many of the platforms into one common format. If Datamatic fails for any reason, this will generate notifications. We monitor this in a couple of different ways, just to make sure we get told when something has broken.
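As a rough illustration of what transforming data into one common format can look like, here is a small sketch. It does not show how Datamatic itself works: the `CommonRow` shape, the platform names and the field names in `FIELD_MAPS` are all assumptions made up for this example.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class CommonRow:
    """Hypothetical common format shared by every platform."""
    platform: str
    day: date
    impressions: int
    spend: float


# Hypothetical mapping from each platform's own field names to the common ones.
FIELD_MAPS = {
    "platform_a": {"day": "date_start", "impressions": "impressions", "spend": "spend"},
    "platform_b": {"day": "Date", "impressions": "Impressions", "spend": "Cost"},
}


def to_common(platform: str, raw: dict) -> CommonRow:
    """Convert one raw report row into the common format.

    Raises KeyError for an unknown platform or a missing field, so a
    malformed report surfaces as a failure instead of passing silently.
    """
    fields = FIELD_MAPS[platform]
    return CommonRow(
        platform=platform,
        day=date.fromisoformat(raw[fields["day"]]),
        impressions=int(raw[fields["impressions"]]),
        spend=float(raw[fields["spend"]]),
    )


row = to_common("platform_b", {"Date": "2024-01-02", "Impressions": "10", "Cost": "1.5"})
```

Letting a bad row raise an error, instead of skipping it, is what makes a failure at this stage something that can be turned into a notification.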

Recently, we had a problem with the process of deploying Datamatic. The monitoring we have in place meant that we were notified about the problem as soon as it happened. We had time to investigate and fix the issue, without any of our users noticing the minor blip.

ETL: processing the data

Things can also fail as we process the data in our Extract, Transform, Load (ETL) process. For example, we’ve had an intermittent issue where DV360 doesn’t provide us with the latest version of a report. It’s a problem on Google’s side and it has corrected itself, but it’s another case where we were notified of a problem and investigated it.

End-to-end checks

Lastly, what we really care about is that everything works end-to-end. It’s not much help if the individual steps all worked but the pieces didn’t work together, or if one of the pieces failed without sending a notification, such as a grabber failing silently. So we also run checks every day to make sure we have the latest data from each platform.

These are customised for each platform, so that we know as soon as possible if we’re missing data from any of the platforms.
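A daily freshness check of this kind could be sketched as follows. This is an illustration only: the `MAX_LAG_DAYS` thresholds, the platform keys and the `stale_platforms` function are assumptions, not the actual values or code we run.

```python
from datetime import date, timedelta
from typing import Dict, List, Optional

# Hypothetical per-platform thresholds: how many days old the newest data may be.
MAX_LAG_DAYS = {"facebook": 1, "dv360": 2, "the_trade_desk": 1}


def stale_platforms(latest: Dict[str, Optional[date]], today: date) -> List[str]:
    """Return the platforms whose newest data is missing or older than allowed."""
    stale = []
    for platform, max_lag in MAX_LAG_DAYS.items():
        newest = latest.get(platform)
        # No data at all counts as stale, which catches a grabber failing silently.
        if newest is None or today - newest > timedelta(days=max_lag):
            stale.append(platform)
    return stale


# Example: DV360 data is three days old and The Trade Desk has no data at all.
latest_seen = {"facebook": date(2024, 1, 9), "dv360": date(2024, 1, 7)}
problems = stale_platforms(latest_seen, today=date(2024, 1, 10))
```

Here `problems` contains `dv360` and `the_trade_desk`. Because the check only looks at the data that actually arrived, it does not depend on any earlier stage having reported its own failure.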

Putting it all together

With all of this in place, we know when each individual part of the data import happens. We also know when we don’t have data for any one of the platforms we support.

To give you an idea of some of the notifications we’ve had in the past few weeks:

- Notification of errors in Datamatic, related to a deployment. Fixed and deployed later that day.

- Notification from Facebook, The Trade Desk and DV360 ETL. This turned out to be a network connectivity issue for Amazon Web Services (AWS), which resolved itself.

And that’s how we keep the machine running smoothly.

Want to know more? We’d love to answer all your questions about the Bench Platform and how it works to power marketing teams.